Posts Tagged ‘hosting’

How do I upload my webfiles?

Sep 09 29

There are two main ways to upload your website files to your web hosting server:

FTP (File Transfer Protocol)

The most common way to upload your files is through FTP. This requires an FTP program. There are many FTP programs out there but we would recommend Filezilla as it is very user friendly. You can download Filezilla free from the following address:

http://filezilla-project.org/

On that page, click on ‘Download Filezilla client’.

Once you have downloaded and installed it, open Filezilla and click on the top left icon (computer screen) to open the Site Manager. Click ‘New Site’ and enter your domain name or any description, as this is just the label for the site. Then enter the following details into the boxes:

Host = Given in your web hosting welcome email

Port = 21

Servertype = FTP

LogonType = Change the drop down list to Normal

Username = Given in your web hosting welcome email

Password = Given in your web hosting welcome email

Then click Connect, and double click on the ‘httpdocs’ folder to enter your hosting webspace.

Once you are connected you will see two side-by-side windows: the left hand side shows the files on your computer and the right hand side shows your web space. To upload your files, either drag and drop them from left to right, or double click on a file on the left to upload it to the right. The transfer progress is shown at the bottom of the screen. If all is well, Filezilla will display the files you have uploaded on the right hand side and your website should now be visible in your internet browser.
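If you would rather script the upload than use Filezilla, the steps above can be sketched with Python's standard ftplib module. This is only a minimal sketch: the function names upload_file and connect_and_upload are made up for illustration, and the host, username and password must be the ones from your own welcome email.

```python
from ftplib import FTP
from pathlib import Path


def upload_file(ftp, local_path, remote_dir="httpdocs"):
    """Upload one local file into remote_dir over an already open FTP session."""
    local_path = Path(local_path)
    ftp.cwd(remote_dir)  # same as double clicking the 'httpdocs' folder
    with local_path.open("rb") as fh:
        # STOR saves the file on the server under the same file name
        ftp.storbinary(f"STOR {local_path.name}", fh)
    return local_path.name


def connect_and_upload(host, username, password, local_path):
    """Connect on port 21, log in, and upload a single file (hypothetical helper)."""
    with FTP() as ftp:
        ftp.connect(host, 21)
        ftp.login(username, password)
        return upload_file(ftp, local_path)
```

Calling connect_and_upload with your host, username, password and a file such as index.html should place that file in httpdocs, assuming your account uses plain FTP on port 21 as described above.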

Using the Plesk File Manager to upload your files.

This method is available on any package hosted on a Plesk server.

You will be given your control panel user details in your welcome email. Once logged into the control panel, click on the File Manager icon. You will again be connected to your server’s web directories; click on ‘httpdocs’ to manage your webfiles.

How to use the File Manager

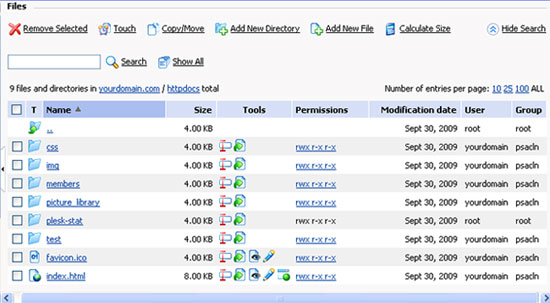

Once you click into your httpdocs directory your webfiles will appear as in the image below:

From here you can also create or delete folders and files, change file and folder permissions, edit existing files, and rename files and folders. Here are a few more helpful tips for using Plesk’s File Manager:

- To upload a file to the current directory, click ‘Add New File’, then either browse your computer for the file or choose ‘create new’ file and specify its location. Click OK.

- To create a folder that will be located in the current directory, click ‘Add New Directory’, then type in the directory name in the Directory name field and click OK.

- To edit an existing file source, click the Edit File icon (pencil). The File Manager’s editor window will open, allowing you to edit the file source. When you have finished with your changes, click ‘Save’ to save the file, or OK to save the file and return to the File Manager panel.

- To remove a file or directory, select the corresponding checkbox, and click Remove Selected.

- To rename a directory or file, click on the Rename icon (small rectangle). A new page will open allowing you to rename the selected file or directory. Type in a new name and click OK.

- To change permissions for a file or directory, click on the permissions icon (which will look something like rw- r-- r--). The permissions settings page will open, allowing you to set the required permissions. Select the correct settings for the file, then click OK to submit.
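A permissions string such as rw- r-- r-- can be hard to read at first. The short Python sketch below converts it to the numeric form (for example 644) that many FTP programs and guides use, assuming the usual Unix meaning of the three groups: owner, group, others. The function name permissions_to_octal is made up for illustration, and the function does not check that each letter is in its proper position.

```python
def permissions_to_octal(perm):
    """Convert a string such as 'rw- r-- r--' to its numeric form, e.g. '644'."""
    bits = perm.replace(" ", "")
    if len(bits) != 9:
        raise ValueError("expected nine permission characters")
    # read = 4, write = 2, execute = 1, dash = permission not granted
    values = {"r": 4, "w": 2, "x": 1, "-": 0}
    digits = []
    for start in range(0, 9, 3):
        group = bits[start:start + 3]  # owner, then group, then others
        digits.append(str(sum(values[char] for char in group)))
    return "".join(digits)


print(permissions_to_octal("rw- r-- r--"))  # prints 644
```

Here the owner can read and write the file (4 + 2 = 6), while the group and everyone else can only read it (4).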

Robots.txt file

Sep 09 22

Have you heard of a robots.txt file? Do you have a robots.txt file on your website? Search engine spiders and similar robots will look for a robots.txt file, located in your main web directory. It’s very simple and can help with your site’s ranking in the search engines.

What is a robots.txt file?

A robots.txt file is a small text file that you place in the root directory of your web site. Search engines send out robots, often called spiders, to look for pages to include in their search results. The robots.txt file is used to fence off these robots from sections of your web site, so they won’t read files in those areas.

How do I create a robots.txt file?

Using a text editor such as Notepad, start with the following line:

User-agent: *

This specifies the robot we are referring to. The asterisk addresses all of them. You can be more specific by entering the bot name but in most cases you would use the asterisk.

The next line tells robots which parts of your website to omit from their crawl:

User-agent: *

Disallow: /cgi-bin/

This would fence off any path on your website starting with the string /cgi-bin/.

Multiple paths can be added using additional disallow lines:

User-agent: *

Disallow: /cgi-bin/

Disallow: /private/

Disallow: /test/

This robots.txt file instructs all robots that any files in directories /cgi-bin/, /private/ and /test/ are off limits.
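To check how a well-behaved robot would read these rules, you can test them with Python's standard urllib.robotparser module. This is only a sketch: www.example.com is a placeholder domain and is_allowed is a made-up helper name.

```python
from urllib.robotparser import RobotFileParser

# The example robots.txt from above
ROBOTS_TXT = """\
User-agent: *
Disallow: /cgi-bin/
Disallow: /private/
Disallow: /test/
"""


def is_allowed(url, robots_txt=ROBOTS_TXT, agent="*"):
    """Return True if a robot that obeys robots_txt may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


print(is_allowed("http://www.example.com/index.html"))          # prints True
print(is_allowed("http://www.example.com/private/notes.html"))  # prints False
```

Any path that starts with one of the Disallow strings is reported as off limits; everything else is allowed.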

If you want search engines to index everything in your site, you don’t need a robots.txt file (not even an empty one).

Using meta tags to block indexing of your pages.

You can also use meta tags to similar effect, one page at a time.

To prevent search engines from indexing a page on your site, place the following meta tag into the header of the page:

<meta name="robots" content="noindex">

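Not all robots support the robots.txt file or the meta tag, so it is advisable to use both across your site. Bear in mind that a robot which obeys a Disallow rule will not fetch that page, so it will never read a meta tag placed on it.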
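If you want to confirm that a page really carries the noindex tag, a small check can be sketched with Python's standard html.parser module. The class name RobotsMetaParser and the function has_noindex are made up for illustration, and the sample pages are invented.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives from every <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # content may hold several comma-separated values, e.g. "noindex, nofollow"
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def has_noindex(html):
    """Return True if the page asks robots not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


blocked = '<html><head><meta name="robots" content="noindex"></head></html>'
normal = "<html><head><title>Home</title></head></html>"
print(has_noindex(blocked))  # prints True
print(has_noindex(normal))   # prints False
```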

You can learn more about robots.txt files here.